The Era of Subsidized AI Model Usage Is Over, the IPOs Are Coming

Hey everyone,

The AI industry is approaching an inflection point that will reshape the priorities of AI model companies and the entire space. There are four interconnected themes I want to walk through today, and they all converge on a single conclusion: the era of subsidizing AI model usage is coming to an end.

Here’s what I cover in this article:

Anthropic is taking over the enterprise — but the curse of the best model is real

OpenAI is losing the enterprise race to Anthropic and facing structural problems heading into its IPO

The era of subsidized AI model usage is ending as both companies prepare for public markets

The IPO race: who lists first matters more than most people realize

Let’s get into it.

Anthropic Is Taking Over Enterprise: And the Curse of the Best Model Is Coming for Them

Anthropic announced that its run-rate revenue had surpassed $30 billion, up from $9 billion at the end of 2025. That’s more than tripling in roughly four months. Anthropic has now reportedly surpassed OpenAI’s run-rate revenue of approximately $25B.

The enterprise composition is what separates Anthropic from the rest. Approximately 80% of revenue comes from business customers. The number of customers spending over $1 million annually has doubled to more than 1,000, up from just over 500 two months ago. Business subscriptions to Claude Code have quadrupled since the start of 2026.

And then there’s Mythos. Just a few days ago, Anthropic announced Claude Mythos Preview - a new general-purpose model that sits in an entirely new tier above Opus. The draft described it as “by far the most powerful AI model we’ve ever developed” and said it is “very expensive for us to serve, and will be very expensive for our customers to use.”

The benchmarks are quite telling: 93.9% on SWE-bench Verified (vs. Opus 4.6’s ~80.9%), 77.8% on SWE-bench Pro, 82% on Terminal-Bench 2.0, 97.6% on USAMO 2026, and 83.1% on CyberGym vs. Opus 4.6’s 66.6% — a 16.5 percentage-point jump on cybersecurity tasks. On Anthropic’s internal zero-day exploit benchmark, Opus 4.6 had a near 0% success rate at autonomous exploit development. Mythos succeeded 181 times out of several hundred attempts on the same Firefox vulnerability task. It also found thousands of zero-day vulnerabilities across every major operating system and browser, including a 27-year-old bug in OpenBSD and a 16-year-old bug in FFmpeg that automated testing had missed across 5 million test runs.

Mythos is not being made generally available: citing cybersecurity risks, Anthropic is first rolling it out to selected companies. It’s deployed through Project Glasswing to 12 partner organizations (Amazon, Apple, Broadcom, Cisco, CrowdStrike, Linux Foundation, Microsoft, Palo Alto Networks, and others) plus about 40 additional organizations, with Anthropic committing $100 million in usage credits.

While I am not dismissing the cyber risks that a model like this could bring, it is also convenient that model providers will now “release” these models to a small group of companies. The reality is that with their current compute, they can’t even serve Opus 4.6 to their user base, let alone Mythos, which is even more expensive to run. Based on Anthropic’s pricing, once the initial $100M in credits is used up, the Mythos Preview these companies have access to is around 5x more expensive than Opus 4.6. It is also rumored that OpenAI will “release” its newest model in a similar fashion, again citing cybersecurity risks.

There has been a lot of frustration from Claude users lately. Many have started hitting the rate limits in their subscription plans much faster, as Anthropic has to manage this surge in demand with the compute it has.

This is what I call the Inference Trap, and both OpenAI and Anthropic have now been caught in it.

The pattern is simple: build the best model → users surge → inference compute explodes → you either throttle users, raise prices, or cannibalize training compute. OpenAI experienced it during the Ghibli moment in March 2025, when ChatGPT gained 1 million new users in a single hour and 100 million signups in a week. Sam Altman admitted they were “forced to do a lot of unnatural things,” specifically borrowing compute capacity from OpenAI’s research division and slowing down the release of new features.

Anthropic is living through its own version right now. In March 2026, the company experienced five major platform outages in a single month. Claude Code users reported burning through 5-hour sessions in under 90 minutes. The problem for Anthropic is that if you are a model provider in this AI race, you don’t want to cannibalize training compute, as it means that you can lose the race for the next model.

This brings me to another point I want to make: pricing increases on frontier AI models are inevitable. When Anthropic eventually deploys Mythos-class models at scale, the inference cost per query will be higher than Opus. And they already can’t serve Opus at current demand levels without throttling. The math only works if prices go up, or if the compute infrastructure grows fast enough to meet demand, which it can’t in the short term.

Moving to OpenAI.

OpenAI Seems to Be Losing the Enterprise Race and Heading Into an IPO With Some Headwinds

While Anthropic is sprinting ahead on enterprise revenue, OpenAI is dealing with a set of problems that are becoming hard to ignore.

The revenue gap has flipped. A year ago, OpenAI was at roughly $6 billion ARR, and Anthropic was at $1 billion. The gap looked huge. Today, Anthropic is at $30 billion and OpenAI is at around $25 billion, or possibly close to Anthropic’s level, but its pace of growth is slower. Anthropic added roughly $21 billion in net new annualized revenue in just three to four months. OpenAI’s enterprise business now makes up 40% of revenue (up from ~30% last year) and is “on track to reach parity with consumer by the end of 2026”, but Anthropic has been enterprise-first from the start, with 80% enterprise revenue and structurally higher retention.
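To make the arithmetic behind these figures explicit, here is a minimal Python sketch. It uses only the run-rate numbers quoted in this article (in billions of dollars, all approximate), and the variable and function names are purely illustrative.

```python
# Run-rate revenue figures quoted in this article, in billions of USD.
# All of them are approximate, so treat the outputs as rough multiples.
ANTHROPIC_ARR = {"a_year_ago": 1.0, "end_of_2025": 9.0, "today": 30.0}
OPENAI_ARR = {"a_year_ago": 6.0, "today": 25.0}


def growth_multiple(start: float, end: float) -> float:
    """Return how many times larger `end` is than `start`."""
    return end / start


# Net new annualized revenue Anthropic added since the end of 2025.
net_new_anthropic = ANTHROPIC_ARR["today"] - ANTHROPIC_ARR["end_of_2025"]

# Year-over-year multiples for both companies.
anthropic_yoy = growth_multiple(ANTHROPIC_ARR["a_year_ago"], ANTHROPIC_ARR["today"])
openai_yoy = growth_multiple(OPENAI_ARR["a_year_ago"], OPENAI_ARR["today"])

print(f"Anthropic net new ARR since end of 2025: ${net_new_anthropic:.0f}B")
print(f"Anthropic year-over-year multiple: {anthropic_yoy:.1f}x")
print(f"OpenAI year-over-year multiple: {openai_yoy:.1f}x")
```

On these inputs, OpenAI roughly quadrupled over the year while Anthropic grew about thirtyfold, which is the gap in pace the paragraph above describes.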

To add to this, SensorTower data now show that ChatGPT’s monthly active users in the US have started to fall slightly, adding to the headwinds.

Even without this data, we have been waiting quite some time for OpenAI to announce that it has reached 1 billion weekly active users; the 800M mark was announced six months ago. This suggests that user growth has slowed down.

The funding structure isn’t perfect either. OpenAI closed a $122 billion round at an $852 billion valuation at the end of March, the largest private funding round in history. But the structure is telling. Amazon committed $50 billion, but only $15 billion arrived as upfront cash. The remaining $35 billion is conditional, tied to milestones that some reports indicate may include achieving certain AI capability thresholds or pursuing an initial public offering by the end of 2026.

SoftBank pledged $30 billion structured in three equal tranches of $10 billion each, arriving in April, July, and October. SoftBank’s structure essentially assumes a liquidity event within that window. About $3 billion came from retail investors through bank channels, and OpenAI was included in ARK Invest ETFs.

In other words, a significant portion of OpenAI’s headline $122 billion raise is conditional on an IPO actually happening. This means OpenAI is under pressure to go public regardless of whether the timing is optimal. There are now reports from The Information that Altman and OpenAI’s CFO are on different sides over the IPO, as Altman is pushing for an IPO this year, while the CFO believes OpenAI is not ready yet. OpenAI has already denied this, but it's not expected that any company would confirm such rumors, even if they were true.

The profitability picture is the key thing. According to different reports, OpenAI’s gross margins sit at approximately 40%, constrained by variable compute costs. The company is generating +$2-3 billion per month but losing +$14 billion per year. Reports of internal documents project that compute costs will reach $121 billion by 2028, with a cumulative loss trajectory that doesn’t reach breakeven until 2029-2030. Compare this to Anthropic, which projects positive free cash flow by 2027-2028 while spending roughly 4x less on training.

OpenAI also has an alternative to Claude Code called Codex, but adoption there, although growing, doesn’t seem to be at the same pace as Claude Code. It’s telling that OpenAI is even offering users more token usage, while Anthropic is limiting it.

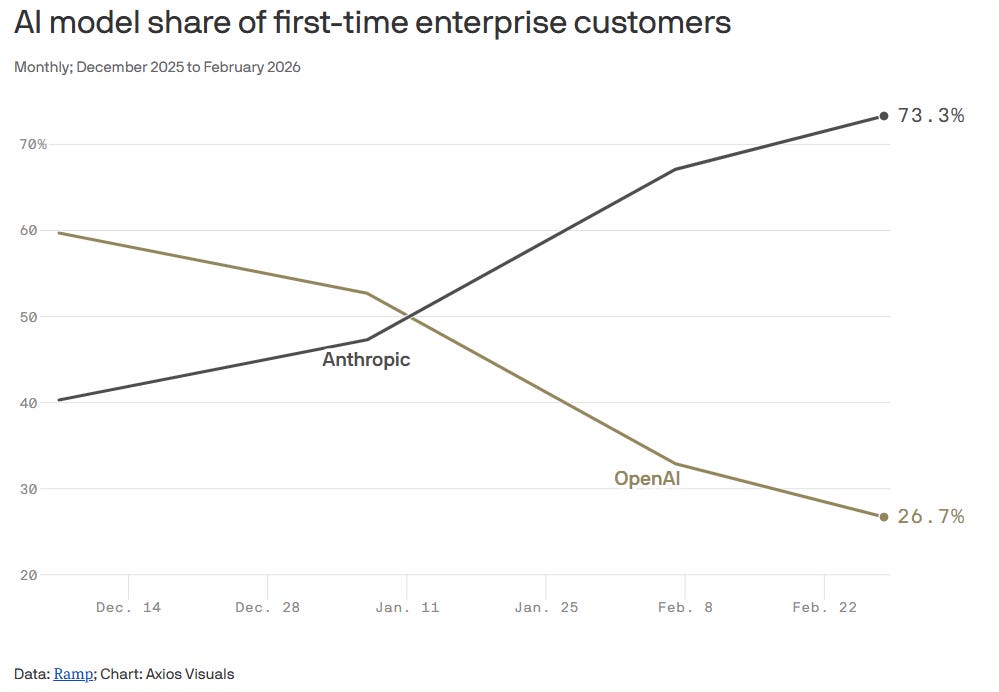

As this data from Ramp shows, the AI model share of first-time enterprise customers has heavily tilted towards Anthropic in the last few months:

The key moat that OpenAI has is the ChatGPT brand, which, as a first mover, became a verb for consumers, much like Google. In recent months, however, Claude and Anthropic have become “the verb” when it comes to enterprises and AI use cases for work. On the consumer end, OpenAI will probably have to shift hard towards an ad-supported business model to get some revenue from its big free user base. Building an efficient ad platform is much more complex than most think and requires time. At the same time, OpenAI is trying to stop Anthropic in the enterprise market, but so far, it doesn’t seem to be working, as Anthropic is capturing the market at a faster pace. The question is whether OpenAI’s strategy of trying to capture both markets at once and “doing everything” is really the right one. I would argue that it is not.

Now you even have Meta entering the AI arena again, with its first AI model since the formation of Meta's superintelligence unit. While the model is not SOTA, there are specific use cases where it is very competitive. Meta focused on use cases like health, social media, games, and shopping. This pattern will become more dominant in the coming years as the AI model market matures and model specialization emerges rather than just general models. In this environment, the importance of having a narrow focus becomes even bigger.

According to The Information, OpenAI is now communicating to investors that they believe one of their important advantages going forward vs Anthropic is in the availability of compute. OpenAI said it believes Anthropic had 1.4 GW of capacity at the end of last year, while OpenAI had 1.9 GW. But OpenAI said it plans to ramp its capacity more steeply, with total gigawatts in the mid-single digit range at the end of this year and more than 10 GW in 2027.

In contrast, it believes Anthropic will have 3 to 4 GW in 2026 and 7 to 8 GW by the end of 2027. While I agree that availability of compute is a big factor going forward, I believe the even more important factor is inference economics: the cost of serving the models to your clients. As users shift workloads to production, availability and reliability become key. Nobody wants a product that is unstable, one that sometimes works great but at other times is not available.

The End of Subsidized AI Model Usage

Both Anthropic and OpenAI are preparing for IPOs. And an IPO changes everything about how an AI company thinks about compute costs.

When you’re private and burning venture capital, you can subsidize inference. You can run models at a loss. You can offer $20/month unlimited plans that cost you +$100/month to serve. You can double the rate limits as promotions. You can hand out credit packages. The goal is growth at all costs, because the next funding round values you on revenue, not margins.
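The subsidy in that $20 plan can be put into numbers with a short Python sketch. The $20 price and $100 serving cost come from the paragraph above (the “+$100” is treated as a lower bound), while the 40% target margin is only an illustrative benchmark borrowed from the gross-margin estimate discussed in this section. The function names are hypothetical.

```python
def monthly_margin(price: float, serving_cost: float) -> tuple[float, float]:
    """Return (profit per subscriber in USD, gross margin as a fraction of price)."""
    profit = price - serving_cost
    return profit, profit / price


def price_for_margin(serving_cost: float, target_margin: float) -> float:
    """Return the price needed to reach `target_margin` (a fraction below 1)."""
    return serving_cost / (1.0 - target_margin)


# Figures from the article: a $20/month plan that costs at least $100/month to serve.
PLAN_PRICE = 20.0
SERVING_COST = 100.0  # lower bound, since the article says "+$100"

profit, margin = monthly_margin(PLAN_PRICE, SERVING_COST)
print(f"Profit per heavy subscriber: ${profit:.0f}/month ({margin:.0%} gross margin)")

# Illustrative assumption: the plan should earn a 40% gross margin on this user.
needed = price_for_margin(SERVING_COST, target_margin=0.40)
print(f"Price needed for a 40% margin at that cost: ${needed:.2f}/month")
```

This only describes the heaviest users. A plan can still be profitable on average if most subscribers use far less than $100 of compute, which is why the alternative to a price increase is a tighter usage limit.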

When you’re public, the scrutiny shifts to unit economics. Gross margins, operating margins, cash burn trajectory, path to profitability — these become the metrics that determine your stock price. An S-1 filing forces you to disclose all of this in audited detail.

Both OpenAI and Anthropic are probably operating at approximately 40% gross margins, constrained by the variable cost of running inference. And while neither company will show a net profit at the time of its IPO, since profitability is projected only toward the end of 2030, gross margin is something investors will watch particularly closely, especially the gross margin on inference. While the margin math works on API usage, subscription plans are still often subsidized by the model companies, and this is something they will look to tweak in the coming months before they file their S-1s.

This has a ripple effect across the entire supply chain. For the past three years, the question has been: “Which chip delivers the most FLOPS?” The answer was always Nvidia, and companies paid whatever Nvidia charged because performance was the bottleneck, and money was abundant.

Going forward, the question becomes: “Which chip gives me the cheapest tokens and the best total cost of ownership (TCO)?” It’s not even about watts anymore, as you can see from recent podcast comments from Nvidia’s Jensen Huang and Google’s Sundar Pichai. It’s about cost per token, because that directly determines your gross margin as a public company, and that is what investors will be laser-focused on. This shift also means the end of subsidized usage in these subscription packages, through either usage limits or higher prices. We will also see even more resources being focused on software optimizations for running the models. Savings on memory and getting more from existing hardware will be the focus in the coming months for both labs, as they are constrained by compute.
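The link between cost per token and gross margin is simple enough to sketch in a few lines of Python. Every number below is hypothetical and chosen only so that the baseline lands on a 40% margin; none of them are actual prices or serving costs from OpenAI, Anthropic, or any chip vendor.

```python
def gross_margin(price_per_mtok: float, cost_per_mtok: float) -> float:
    """Gross margin as a fraction, given price and serving cost per million tokens."""
    return 1.0 - cost_per_mtok / price_per_mtok


# HYPOTHETICAL inputs, in USD per million tokens (not real vendor figures).
PRICE_PER_MTOK = 10.0
COST_PER_MTOK = 6.0

baseline = gross_margin(PRICE_PER_MTOK, COST_PER_MTOK)

# Same price, but serving cost cut by 25% through cheaper chips or better software.
optimized = gross_margin(PRICE_PER_MTOK, COST_PER_MTOK * 0.75)

print(f"Baseline gross margin: {baseline:.0%}")
print(f"Gross margin after a 25% cost-per-token cut: {optimized:.0%}")
```

Under these assumed numbers, a 25% reduction in cost per token moves gross margin by 15 percentage points without touching the price, which is why cost per token matters more than raw FLOPS once margins are under public scrutiny.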

Both of these companies also need to do the hard math on the IPO being their “last” funding round: after the IPO, they need enough capital to support their growth and cash burn for the coming years. Issuing additional stock to raise capital once you are a public company is never viewed positively by the market, so nobody wants to go down that route.

Anthropic is uniquely well-positioned here because it runs Claude on a diversified hardware stack across three suppliers: Nvidia GPUs, Google TPUs, and Amazon Trainium. This gives it real negotiating leverage and the ability to route workloads to whichever chip offers the best price-performance for each model tier. The company just announced a deal with Google and Broadcom for approximately 3.5 gigawatts of next-generation TPU capacity starting in 2027. This is on top of its existing AWS Trainium partnership and Nvidia GPU deployments. It is worth noting that AWS’s CEO just mentioned in an interview on CNBC yesterday that all of Anthropic’s AI models were trained on Amazon Trainium (even Mythos).

OpenAI, by contrast, has been more dependent on Nvidia through its Azure partnership with Microsoft, though it has been diversifying toward custom silicon.

The IPO Race: Who Lists First Gets the Biggest Check

There’s one final dynamic that ties all of this together: both Anthropic and OpenAI know that whoever goes public first has a significant advantage, and the window for both is narrowing. On top of that, SpaceX (which now includes xAI) is also racing towards a +$1T IPO. OpenAI is targeting a +$1T IPO as well. Anthropic just closed a funding round at a $380 billion valuation, but because of the surge in usage and revenue, it is already valued at around $500-$700 billion in secondary markets, so its IPO could be in the $800B-$1T range as well.

Between these three companies alone, we’re looking at potentially +$200 billion in capital being raised from public markets within a 6-12 month window. That’s an enormous liquidity event. For context, the entire US IPO market raised approximately $33 billion in 2024. Even in the hot 2021 market, total US IPO proceeds were around $140 billion.
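For a sense of scale, this small Python sketch compares the potential raise with the two reference years mentioned above. It uses only the figures from this paragraph, in billions of dollars, and treats the “+$200 billion” as a lower bound; the names are illustrative.

```python
# Figures from the paragraph above, in billions of USD.
POTENTIAL_RAISE = 200.0  # lower bound across the three IPOs
US_IPO_PROCEEDS = {"2024": 33.0, "2021": 140.0}


def multiple_of_year(year: str) -> float:
    """Return the potential raise as a multiple of that year's US IPO proceeds."""
    return POTENTIAL_RAISE / US_IPO_PROCEEDS[year]


for year in ("2024", "2021"):
    print(f"Potential raise vs. {year} US IPO proceeds: {multiple_of_year(year):.1f}x")
```

In other words, three listings would need to absorb roughly six times what the entire US IPO market raised in 2024, and more than the whole of 2021.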

This is why the race to go first matters so much. The first to market captures the freshest investor capital and sets the valuation benchmark. The second has to compete for the same institutional allocation. The third might struggle if the market has indigestion from the first two.

OpenAI’s board is reportedly concerned that if Anthropic lists first, it could set a valuation benchmark that makes OpenAI’s $1 trillion target look stretched — especially now that Anthropic has higher revenue, better enterprise concentration, and a more credible path to profitability. On the other hand, if OpenAI lists first, it establishes itself as the “AI category-defining IPO” and benefits from a first-mover premium in public market pricing.

Both companies know this. Both are preparing in parallel. And both are racing against time, because every month that passes, compute costs pile up, margins need to improve, and the public market window could shift with macro conditions.

Summary

We are entering a key new period in AI where unit economics take center stage. At the same time, I expect we will see a rapid pace of software optimizations to serve these models more efficiently in the coming months, as AI labs put their best talent towards this task: it has now become the most important constraint on growth and profitability. These optimizations will focus on easing key bottlenecks, such as memory (KV cache, context window) and wafer availability. Model distillation and the trend toward smaller models will also grow faster than before because of this.

If I were to speculate, the hardware companies might have a “less golden” time than the era they have had so far, while cloud providers might benefit the most, as these software optimizations mean that they get more juice out of their existing infrastructure while demand for compute keeps surging because of wider adoption of AI.

Until next time,

Next week, we are publishing an article on some key developments in the AI space when it comes to Meta and Amazon, exclusive for paid subscribers. If you are not yet a paid subscriber, consider signing up.

Thank you!

Disclaimer:

I own Google (GOOGL), Amazon (AMZN), Microsoft (MSFT), Meta (META) stock.

Nothing contained in this website and newsletter should be understood as investment or financial advice. All investment strategies and investments involve the risk of loss. Past performance does not guarantee future results. Everything written and expressed in this newsletter is only the writer’s opinion and should not be considered investment advice. Before investing in anything, know your risk profile and if needed, consult a professional. Nothing on this site should ever be considered advice, research, or an invitation to buy or sell any securities.

The Anthropic growth numbers here are staggering and they connect directly to the Palantir valuation debate. Anthropic hit $30B ARR in roughly 5 years. Palantir hit $5B in 20 years. Both companies sell AI integration to enterprises, but Anthropic is doing it through APIs and agents, not forward-deployed engineers on-site.

I just published a deep dive on PLTR showing 71x revenue, roughly 300x owner earnings after normalizing for SBC and taxes. Even in the most generous Monte Carlo scenario (45% revenue CAGR, 48% margins), the stock doesn't even come close to $136. If you layer in the competitive threat from Anthropic commoditizing the orchestration layer, the bear case gets stronger.

The pricing discipline angle is interesting though. If AI model costs rise, does that make Palantir's "we integrate any model" positioning more or less valuable?

You're completely right at the micro level. My maths might be completely out on this but I think if you were to calculate the API cost against the rough limits for Claude/ChatGPT/Gemini you get something like $40, $32, $19.50 if someone was to max out their credits daily for a whole month (assumes a 3:1 input:output token rate at 1M tokens).

At a macro level, it's unlikely that everyone is maxing out their limits in each session, so they are likely still making money on each subscription. But come IPO, this is exactly what will be analysed in excruciating detail to understand what the non-API subsidisation rate is likely to be. Claude's recent move to charge Enterprises what is almost a "seat cost entry", with all credits paid for on top, further supports your points above about moving towards IPO levels of self-sufficiency.

The huge risk will be the continuing supply-chain risk designation that they'll need to fully resolve pre-IPO. The reading of the situation I have is that Anthropic will be baking in more safety into their models at pre-training which the DoD won't like.